A/B Testing on Amazon: The Tests That Actually Move Revenue

INTRODUCTION — WHY MOST AMAZON BRANDS RUN TESTS THAT DON’T MATTER

Amazon brands love the idea of A/B testing.

It feels scientific.

It feels strategic.

It feels like you’re being data-driven.

It feels like you’re finally taking control of your listing performance.

So what happens?

Teams start testing:

- Bullet variations

- Title phrasing

- Description edits

- Keyword order

- Minor copy tweaks

- Brand tone adjustments

- Line breaks, commas, phrasing, and structure

And after weeks of testing?

Revenue doesn’t move.

Conversion doesn’t move.

CTR stays the same.

PPC still bleeds.

Ranking refuses to shift.

Everyone looks around confused.

“Why isn’t the test helping?”

“We changed something — why don’t we see a difference?”

“Is the experiment broken?”

“Is Amazon suppressing the change?”

“Do we need new bullets again?”

“Should we test longer?”

This is where most brands get stuck.

They’re testing, but they’re testing the wrong things.

They’re running experiments on parts of the listing that have minimal influence on revenue-driving behavior.

And the parts that do influence revenue?

They’re not testing those at all.

This is the foundational flaw in how most Amazon brands run experiments — they optimize the wrong assets.

This blog cuts through the noise.

It reveals exactly which A/B tests actually move revenue, why they work, how to run them properly, and how to avoid the time sink most brands fall into.

Let’s dig in.

CHAPTER 1 — UNDERSTANDING WHAT A/B TESTING REALLY MEASURES ON AMAZON

Before we get into which tests matter, we need one crucial mindset shift:

A/B testing is not about “improving the listing.”

It’s about improving customer behavior.

The behaviors Amazon cares about, and the only ones A/B testing truly affects, are:

- CTR (Click-Through Rate)

- CVR (Conversion Rate)

- Add-to-Cart Rate

- Purchase Completion Rate

- On-Page Time

- Bounce Rate

These behaviors directly influence:

- Organic rank

- PPC cost

- Profitability

- Review velocity

- Brand visibility

- Growth trajectory

But here’s the important part:

Not all listing elements influence customer behavior equally.

Some have an enormous impact.

Some have moderate impact.

Some have almost no impact at all — even if they feel important.

Understanding which elements fall into which group is the foundation of meaningful A/B testing.

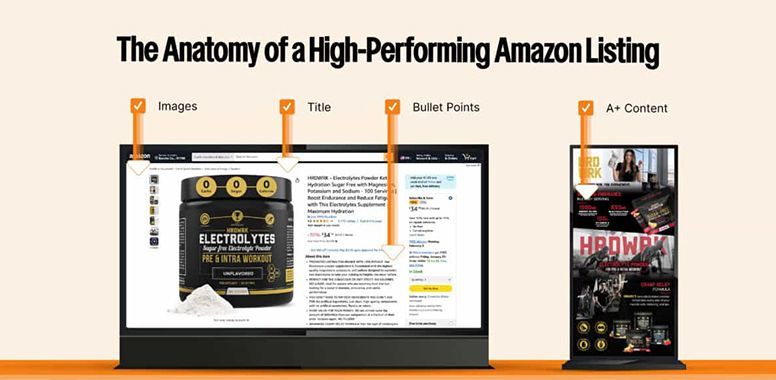

CHAPTER 2 — THE LISTING ELEMENTS WITH THE HIGHEST IMPACT

If the goal is to increase revenue, then the goal is to influence the behavior that leads to revenue.

Here are the elements that move revenue the most:

1. Main Image — Highest impact on CTR

The main image determines:

- Whether customers click your listing

- Whether your ads get cheap or expensive traffic

- Whether your organic rank improves or drops

- Whether shoppers stop scrolling

2. Price — Highest direct impact on CVR

Small price adjustments often cause massive swings in CVR.

3. Image Stack (Images #2–#7) — High impact on CVR and Bounce Rate

These images determine:

- Whether the shopper understands the product

- Whether the shopper trusts the brand

- Whether the product fits their needs

- Whether they continue scrolling

- Whether they add to cart

4. A+ Content — Moderate to high impact on CVR

A+ reinforces key benefits and reduces buyer friction.

5. Title — Moderate impact on CTR + indexing

Strong titles influence relevance and click-through.

When you rank these by actual impact, the hierarchy becomes clear:

Main Image → Price → Image Stack → A+ Content → Title → Bullets → Description → Backend Keywords

But most brands test in the opposite order:

They start with bullets, one of the lowest-impact assets.

This is why testing feels pointless.

You’re testing the wrong things.

CHAPTER 3 — THE TESTS MOST BRANDS RUN THAT MOVE NOTHING

These are the tests that waste time and rarely increase revenue:

❌ Bullet rewrites

Buyers rarely read bullets unless they’re already convinced.

❌ Minor title phrasing changes

Changing “Premium Stainless Steel” to “High-Grade Stainless Steel” rarely influences CTR.

❌ Description rewrites

Description sits too low on the page to change CVR significantly.

❌ Tiny copy edits

Commas vs. dashes vs. separators don’t change behavior.

❌ Keyword rearrangements

SEO relevance rarely shifts enough to impact revenue.

❌ A/B testing packaging graphics (in images)

Unless packaging is the differentiator, it rarely impacts decisions.

❌ Rearranging bullet order

Buyers skim — order rarely sways them.

These tests feel important but rarely matter.

You might get a +1% improvement, but you won’t get the +10–30% conversion lift that real revenue-impacting tests deliver.

CHAPTER 4 — THE TESTS THAT ACTUALLY MOVE REVENUE

Here we break down the tests with the highest impact — and why they work.

TEST 1 — MAIN IMAGE TRANSFORMATION

Impact: Extremely High (CTR + Ranking + PPC Cost)

Changing the main image is the single most powerful A/B test on Amazon.

Why?

Because it influences the first — and most critical — shopper behavior:

Click or scroll.

If they don’t click → no sale.

If they do click → everything else becomes possible.

Even small changes to the main image can produce massive lifts:

- Angle changes

- Lighting improvements

- Better cropping

- Removal of dead space

- Cleaner contrast

- More vibrant product rendering

- Adding packaging (if compliant)

- Including size props

- Switching to a 3D render

- Showing accessories

- Zooming in so the product fills more of the frame

A great main image test can increase CTR by:

10% – 50%+

That improvement alone can:

- Lower CPC

- Improve ad efficiency

- Boost ranking

- Increase organic traffic

- Lower TACoS

- Increase revenue

If you only run one test — it should be this one.

TEST 2 — PRICE ELASTICITY TESTS

Impact: Extremely High (CVR + Revenue Per Session)

Most brands avoid price testing out of fear.

“What if we lose sales?”

“What if customers stop buying?”

“What if we ruin our ranking?”

But price tests are incredibly powerful.

Small adjustments — even $1 to $3 — can:

- Increase conversion

- Improve perceived value

- Reduce buyer friction

- Increase total revenue

- Improve session value

- Shift your product into a more competitive zone

Example outcomes:

- Increasing price → fewer units but more profit

- Decreasing price → more units, often more total revenue, better ranking

- Finding the “sweet spot” price → maximum revenue per session

Price tests are uncomfortable but essential.
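To make "revenue per session" concrete, here is a minimal Python sketch that compares a few tested price points. It assumes you recorded sessions and units ordered at each price over comparable windows; the `PricePoint` class, the `unit_cost` figure, and all numbers are hypothetical, chosen only to illustrate the arithmetic (revenue per session = CVR × price; profit per session = CVR × (price - unit cost)).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PricePoint:
    """One price tested over a comparable window (hypothetical fields)."""
    price: float       # selling price during the window
    sessions: int      # sessions recorded at this price
    units: int         # units ordered at this price
    unit_cost: float   # assumed landed cost plus fees per unit

    @property
    def cvr(self) -> float:
        # Conversion rate: units ordered per session
        return self.units / self.sessions

    @property
    def revenue_per_session(self) -> float:
        return self.cvr * self.price

    @property
    def profit_per_session(self) -> float:
        return self.cvr * (self.price - self.unit_cost)


def best_price(points: List[PricePoint], metric: str = "revenue_per_session") -> PricePoint:
    """Return the price point that maximizes the chosen per-session metric."""
    return max(points, key=lambda p: getattr(p, metric))


# Illustrative numbers only, not real test results.
points = [
    PricePoint(price=24.99, sessions=2000, units=260, unit_cost=11.00),
    PricePoint(price=26.99, sessions=2000, units=236, unit_cost=11.00),
    PricePoint(price=29.99, sessions=2000, units=190, unit_cost=11.00),
]

for p in points:
    print(f"${p.price:.2f}: CVR {p.cvr:.1%}, "
          f"revenue/session ${p.revenue_per_session:.2f}, "
          f"profit/session ${p.profit_per_session:.2f}")

print("Best for revenue:", best_price(points).price)
print("Best for profit:", best_price(points, "profit_per_session").price)
```

With these illustrative numbers, the lowest price wins on revenue per session while the middle price wins on profit per session, which is why it helps to decide which metric you are optimizing before the test starts.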

TEST 3 — FULL IMAGE STACK REDESIGN

Impact: High (CVR)

Your images are your real salesperson — not your bullets.

A strong image stack:

- Builds trust

- Shows benefits

- Clarifies features

- Answers objections

- Educates the shopper

- Creates desire

- Reduces confusion

Testing new images — especially the first 3–4 — is a major CVR booster.

Key image elements to test:

- Benefit-order placement

- Infographic clarity

- How-to steps

- Lifestyle context

- Product sizing images

- Comparison charts

- Social proof badges

- Icons vs. text layouts

- Color schemes and typography

A powerful image stack test can increase CVR by:

5–30%

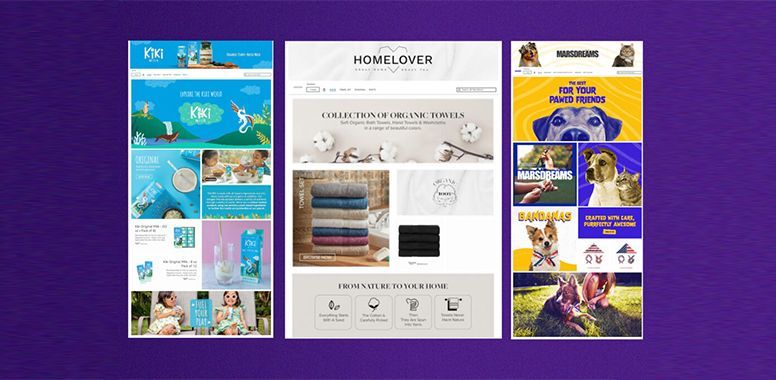

TEST 4 — A+ CONTENT REDESIGN

Impact: Moderate to High (CVR + Bounce Rate)

A+ matters more than brands realize because:

- Mobile shoppers scroll A+ quickly

- It visually completes the “story”

- It reduces friction and uncertainty

- It builds trust

- It boosts retention

When customers scroll through A+, they decide:

“Yes, I’m ready to buy,”

or

“No, I don’t trust this.”

Testing A+ variations can significantly improve the bottom of the funnel.

TEST 5 — TITLE RESTRUCTURING (NOT REWRITING)

Impact: Moderate (CTR + Relevancy)

Title tests matter only when the structure changes — not when a few words move around.

Testing:

- Keyword order

- Clarity

- Benefit-first vs. spec-first

- Adding a use-case

- Adding size or count

- Adding compatibility

These can shift CTR, especially on mobile.

CHAPTER 5 — HOW TO STRUCTURE A SUCCESSFUL A/B TEST

Running a test is not enough.

Running it correctly is the key.

Here’s the framework used by top-tier Amazon operators:

STEP 1 — Identify the behavior you want to influence

- CTR → test main image or title

- CVR → test image stack or price

- Bounce Rate → test A+ or images

- Add-to-Cart Rate → test benefits in images

- Session value → test price

You must know the goal before making a change.

STEP 2 — Track baselines for at least 14 days

Baseline metrics include:

- Sessions

- Unit Session Percentage (conversion)

- CTR

- Add-to-cart %

- Buy Box %

- Review count and star rating

- PPC spend

- TACoS

- Organic rank

- Impression volumes

Without a baseline, you can’t interpret the results accurately.
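A baseline is easiest to trust when it is computed from totals rather than by averaging daily percentages, since averaging gives a slow Tuesday the same weight as a busy Saturday. The Python sketch below assumes you can export one row per day with the fields shown; the `DailyMetrics` class and its field names are hypothetical, not an Amazon report schema, and TACoS is taken as ad spend divided by total sales.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DailyMetrics:
    """One day of listing data (hypothetical field names)."""
    impressions: int
    clicks: int
    sessions: int
    units: int
    ad_spend: float
    total_sales: float


def baseline(days: List[DailyMetrics], min_days: int = 14) -> Dict[str, float]:
    """Pool daily rows into baseline rates; refuses windows shorter than min_days."""
    if len(days) < min_days:
        raise ValueError(f"need at least {min_days} days, got {len(days)}")
    impressions = sum(d.impressions for d in days)
    clicks = sum(d.clicks for d in days)
    sessions = sum(d.sessions for d in days)
    units = sum(d.units for d in days)
    ad_spend = sum(d.ad_spend for d in days)
    total_sales = sum(d.total_sales for d in days)
    return {
        "ctr": clicks / impressions,        # pooled click-through rate
        "cvr": units / sessions,            # pooled conversion (units per session)
        "tacos": ad_spend / total_sales,    # ad spend as a share of total sales
        "sessions_per_day": sessions / len(days),
    }
```

Running the same function over the test window gives numbers that are directly comparable to the baseline.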

STEP 3 — Test ONE THING at a time

If you change:

- Images

- A+

- Bullets

- Title

…all in the same week, you have NO IDEA what caused the result.

Mini rule:

One variable = one test.

STEP 4 — Let your test run for 14–28 days

Short tests are misleading because:

- Amazon normalizes traffic

- Competitors fluctuate

- PPC auction dynamics shift

- Weekends behave differently from weekdays

You need time.
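One way to see why short tests mislead is to estimate how much traffic it takes to detect a given lift at all. The sketch below uses the standard normal-approximation sample-size formula for comparing two conversion rates (two-sided test, 5% significance, 80% power by default). The function names, the 14-day floor, and rounding up to whole weeks (so weekdays and weekends are equally represented) are assumptions that mirror the guidance above, not an Amazon rule.

```python
import math
from statistics import NormalDist


def sessions_per_variant(baseline_cvr: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Sessions each variant needs to detect `relative_lift` in conversion rate
    with a two-sided two-proportion z-test (normal approximation)."""
    p1 = baseline_cvr
    p2 = baseline_cvr * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def days_needed(baseline_cvr: float, relative_lift: float,
                sessions_per_day_per_variant: float) -> int:
    """Days needed, with an assumed 14-day floor, rounded up to whole weeks."""
    needed = sessions_per_variant(baseline_cvr, relative_lift)
    days = math.ceil(needed / sessions_per_day_per_variant)
    return max(14, math.ceil(days / 7) * 7)


for lift in (0.01, 0.10, 0.30):
    n = sessions_per_variant(0.10, lift)
    print(f"+{lift:.0%} lift from a 10% CVR: {n:,} sessions per variant, "
          f"{days_needed(0.10, lift, 500)} days at 500 sessions/day")
```

Because required sessions grow with the inverse square of the absolute difference, a +1% relative lift needs roughly 100 times the traffic of a +10% lift, which is one more reason small copy tweaks rarely produce a readable result.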

STEP 5 — Evaluate with cold data, not emotion

Ask:

- Did CTR increase?

- Did CVR increase?

- Did PPC efficiency improve?

- Did the session value increase?

- Did ACoS/TACoS drop?

- Did organic rank improve?

If the numbers say yes — keep it.

If the numbers say no — revert.
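To keep the keep-or-revert call on cold data, the two conversion rates can be compared with a two-proportion z-test. This Python sketch assumes you have sessions and converted sessions (orders) for the original and the variant over equal-length windows; a sequential before/after comparison is more exposed to seasonality and competitor moves than a simultaneous split, so treat its p-value as a rough guide. The function names and the 5% threshold are illustrative choices.

```python
import math
from statistics import NormalDist
from typing import Dict


def compare_conversion(sessions_a: int, orders_a: int,
                       sessions_b: int, orders_b: int) -> Dict[str, float]:
    """Two-proportion z-test: A is the original listing, B is the variant."""
    p_a = orders_a / sessions_a
    p_b = orders_b / sessions_b
    pooled = (orders_a + orders_b) / (sessions_a + sessions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / sessions_a + 1 / sessions_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided
    return {"lift": (p_b - p_a) / p_a, "z": z, "p_value": p_value}


def decide(result: Dict[str, float], alpha: float = 0.05) -> str:
    """Keep only a statistically significant improvement; otherwise revert."""
    if result["lift"] > 0 and result["p_value"] < alpha:
        return "keep"
    return "revert"


result = compare_conversion(10_000, 1_000, 10_000, 1_150)
print(result, decide(result))
```

The same check works for CTR by swapping in impressions and clicks.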

CHAPTER 6 — HOW TO AVOID THE BIGGEST TESTING MISTAKES

Most brands waste testing opportunities because they fall into one of these traps:

Mistake 1 — Testing bullets first

Bullets rarely move revenue in any meaningful way.

They are low-impact.

Mistake 2 — Testing too many variables at once

This creates confusion and useless data.

Mistake 3 — Running tests too short

Amazon needs time to normalize.

Mistake 4 — Letting emotions influence decisions

The winning result isn’t always the one you hoped for.

Pretty images don’t always perform better.

Mistake 5 — Ignoring mobile behavior

Most Amazon shoppers browse on mobile.

Test your visuals with mobile screenshots, not desktop previews.

Mistake 6 — Not testing price

It is the easiest lever for improving conversion — yet the most ignored.

CHAPTER 7 — HOW A/B TESTING DIRECTLY INCREASES REVENUE

Let’s make this simple.

Every test that improves CTR or CVR at the same price increases revenue.

If CTR increases by 20%…

→ more people enter your listing

→ more conversions

→ higher ranking

→ cheaper ads

→ more organic traffic

→ more revenue

If CVR increases by 10%…

→ more buyers

→ stronger ranking

→ better return on ads

→ higher session value

→ more profitability

→ faster brand growth

This is why testing matters — when you test the right things.
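The chains above compound because revenue is a product of funnel rates. Here is a minimal Python sketch with hypothetical inputs, assuming impressions and average order value stay fixed, so it captures only the direct effect before any ranking or ad-cost gains:

```python
def revenue(impressions: float, ctr: float, cvr: float, avg_order_value: float) -> float:
    """Revenue = impressions x CTR x CVR x average order value."""
    sessions = impressions * ctr
    orders = sessions * cvr
    return orders * avg_order_value


# Hypothetical starting point, not real data.
base = revenue(100_000, 0.005, 0.12, 30.0)
ctr_up = revenue(100_000, 0.005 * 1.20, 0.12, 30.0)          # CTR +20%
cvr_up = revenue(100_000, 0.005, 0.12 * 1.10, 30.0)          # CVR +10%
both_up = revenue(100_000, 0.005 * 1.20, 0.12 * 1.10, 30.0)  # both lifts together

for label, value in [("CTR +20%", ctr_up), ("CVR +10%", cvr_up), ("Both", both_up)]:
    print(f"{label}: ${value:,.0f} ({value / base - 1:+.0%} vs. ${base:,.0f} baseline)")
```

Because the gains multiply, the two lifts together add 32% (1.20 × 1.10 = 1.32), more than the 30% you would get by simply adding them.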

CHAPTER 8 — THE REVENUE-DRIVING TESTING BLUEPRINT

Here is the order top-performing brands typically follow:

PHASE 1 — Fix traffic

- Main image test

- Title structure test

PHASE 2 — Fix conversion

- Price test

- Image stack overhaul

- A+ redesign

PHASE 3 — Fix retention

- Lifestyle image tests

- Social proof placement tests

PHASE 4 — Expand

- New variations

- Store testing

- Sponsored Brand creative testing

This blueprint reliably increases revenue — often dramatically.

CHAPTER 9 — A/B TESTING DONE RIGHT BECOMES A COMPETITIVE ADVANTAGE

Most brands see A/B testing as optional.

Top brands see it as a revenue engine.

When executed correctly, testing becomes:

- A ranking advantage

- A conversion advantage

- An advertising advantage

- A competitive advantage

- A long-term moat

Most brands are testing the wrong things.

If you test the right ones — consistently — you win.

CONCLUSION — A/B TESTING IS ONLY POWERFUL WHEN YOU TEST WHAT MATTERS

If you remember only one thing from this entire blog, it should be this:

A/B testing should focus on the elements that influence shopper behavior the most.

That means:

- Main image

- Price

- Image stack

- A+

- Title structure

NOT:

- Bullet formatting

- Description edits

- Keyword reshuffling

- Tiny copy changes

The tests that actually move revenue are bold.

They’re visual.

They’re behavioral.

They’re meaningful.

When you test the right levers, Amazon becomes predictable.

Conversion improves.

Ranking improves.

Advertising becomes cheaper.

Revenue grows.

Profit grows.

The entire flywheel starts spinning faster.

A/B testing is not about tweaking.

It’s about transforming.

William Fikhman is the founder of Chief Marketplace Officer (CMO), a fractional Amazon executive agency based in Los Angeles, California. He began selling on Amazon in 2009, scaling to $5M in year one and $20M+ within two years. Over 16 years, William has managed Amazon operations for more than 100 consumer brands, overseeing $300M+ in marketplace revenue across Seller Central and Vendor Central. He founded CMO to give consumer brands access to senior-level Amazon leadership on a fractional basis — without the cost of a full-time hire or the limitations of a traditional agency. William specializes in brand protection, distribution control, Amazon PPC strategy, and marketplace operations.
